Document grouping

Experience Optimizer lets you define categories and group documents that are relevant to each other. With document groups, you can:

- Block or boost a document group. For example, promote a new document group to the top of the results for greater visibility.

- Apply rules individually to documents within a group. For example, boost ballet-related documents to the top of the group when a user searches for ballet dance contest.

Best practices

These parameters group documents and are defined in the Additional Query Parameters Stage:

| Parameter Name | Parameter Value | Update Policy |
|---|---|---|
| group | true | default |
| group.format | grouped | default |
| group.ngroups | true | default |
| group.field | style_id_s | default |
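The parameters in this table are Solr result-grouping parameters. As a rough illustration (not how Fusion applies them internally, where the Additional Query Parameters Stage adds them), the Python sketch below builds the query string a grouped request would carry. The helper name `build_grouped_query` is hypothetical, and `style_id_s` is simply the example field from the table.

```python
from typing import Dict, Optional
from urllib.parse import urlencode

# Grouping parameters from the table above. style_id_s is the example group
# field used in this section; substitute the field that identifies your groups.
GROUPING_PARAMS: Dict[str, str] = {
    "group": "true",            # turn on result grouping
    "group.format": "grouped",  # return results nested under each group
    "group.ngroups": "true",    # also report the number of matching groups
    "group.field": "style_id_s",
}


def build_grouped_query(q: str, extra: Optional[Dict[str, str]] = None) -> str:
    """Return a URL-encoded query string carrying the grouping parameters.

    Hypothetical helper for illustration only; in Fusion these parameters are
    added by a query pipeline stage, not by client code.
    """
    params = {"q": q, **GROUPING_PARAMS}
    if extra:
        params.update(extra)
    return urlencode(params)


print(build_grouped_query("ballet dance contest"))
# q=ballet+dance+contest&group=true&group.format=grouped&group.ngroups=true&group.field=style_id_s
```

The Update Policy column is not modeled here; the sketch only shows which name/value pairs end up on the request.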

- Add a Query Pipeline Stage

- Additional Query Parameters Stage

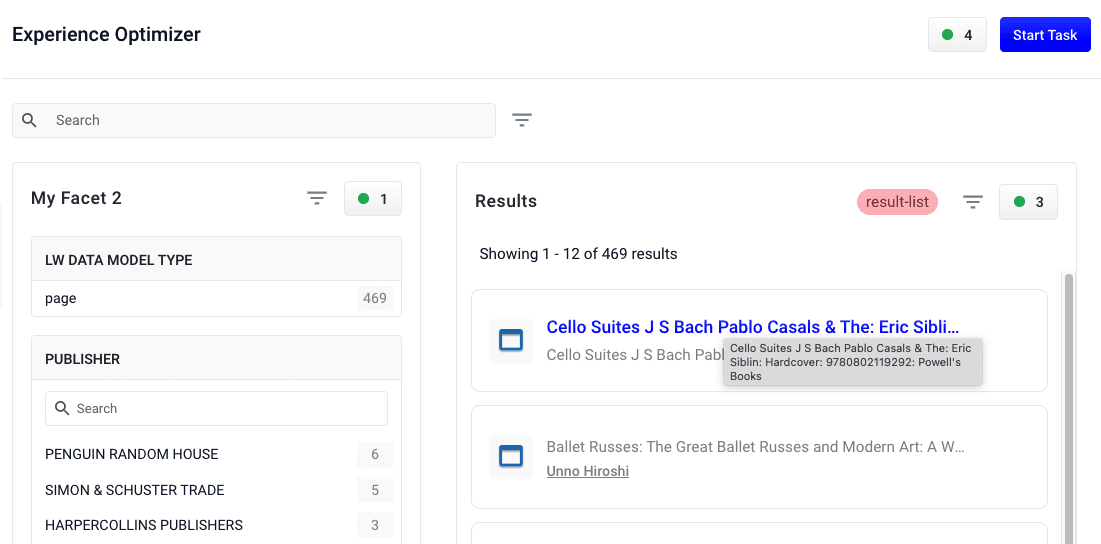

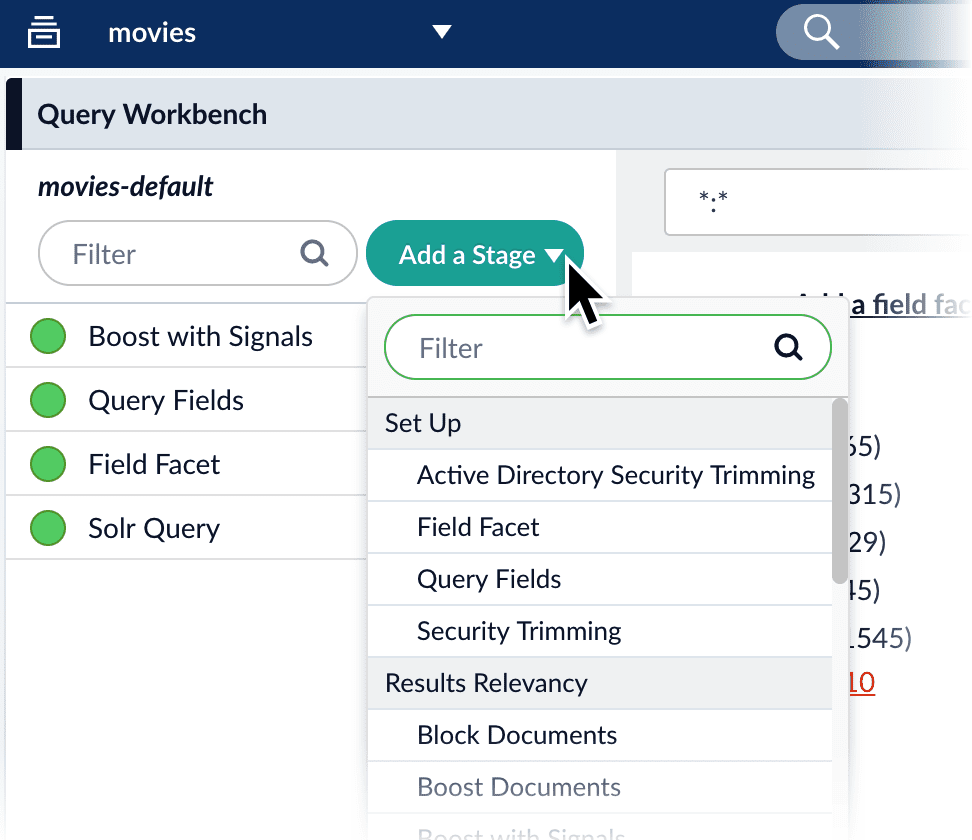

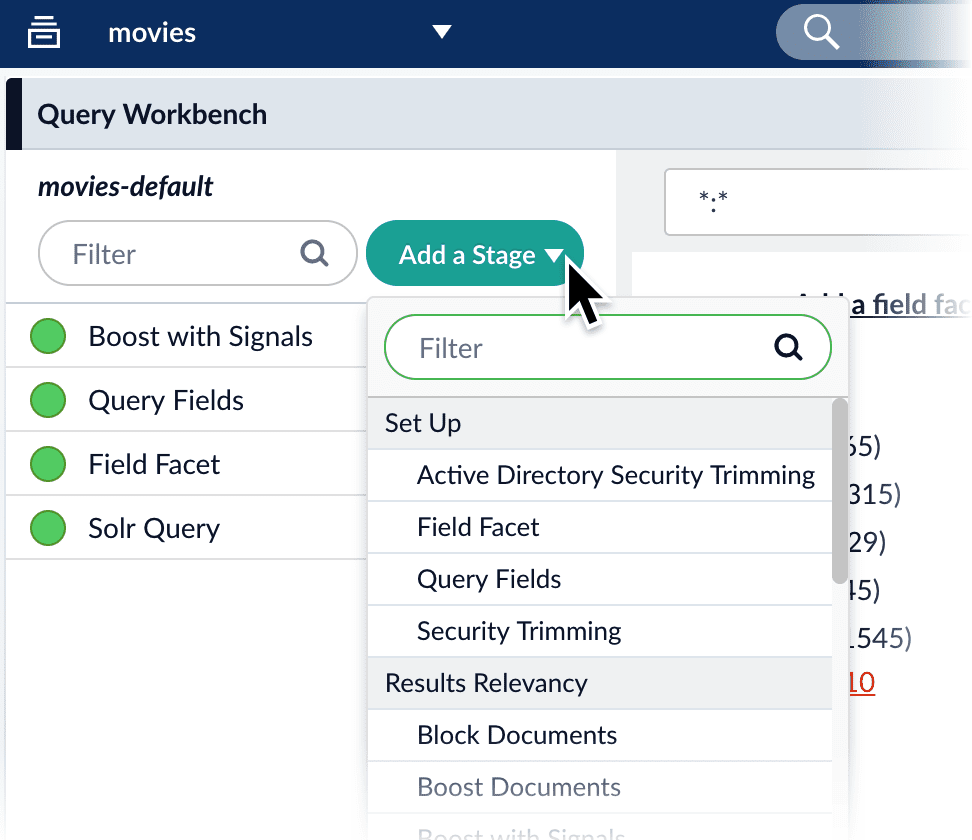

Add a Query Pipeline Stage


In the Query Workbench, click Add a Stage to add query pipeline stages that can perform query setup, results relevancy, troubleshooting, and more.

Collapse/Expand parser grouping

The Collapse/Expand parser supports grouping with the following parameters:

| Parameter Name | Parameter Value |
|---|---|
| expand | true |
| enableElevation | true |
| group | false |

See Collapse and Expand Results for more information.
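The Collapse/Expand parameters listed above can be sketched as a query string in the same way. This assumes (it is not stated in this section) that collapsing is applied through Solr's collapsing query parser as a filter query on the same `style_id_s` field used for grouping; the helper `build_collapsed_query` is hypothetical.

```python
from urllib.parse import urlencode

# Parameters from the Collapse/Expand table above.
COLLAPSE_EXPAND_PARAMS = {
    "expand": "true",           # return collapsed members in an "expanded" section
    "enableElevation": "true",  # keep query elevation (pinned documents) active
    "group": "false",           # standard result grouping is switched off
}


def build_collapsed_query(q: str, field: str = "style_id_s") -> str:
    """Hypothetical helper: collapse results to one document per `field` value."""
    params = {
        "q": q,
        # Assumption: the collapsing parser is applied as a filter query
        # on the same field used for grouping earlier in this section.
        "fq": "{!collapse field=%s}" % field,
        **COLLAPSE_EXPAND_PARAMS,
    }
    return urlencode(params)


print(build_collapsed_query("ballet dance contest"))
```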

Search rewrites

Search rewriting lets you modify search queries to more accurately reflect the intentions of your customers. With search rewrites, you can:

- Create search rewrites manually.

- Edit, test, review, and publish the search rewrites generated automatically from signals data.

Search rewrite types include:

- Head/Tail - improves poorly performing searches.

- Misspelling detection - corrects common spelling mistakes.

- Phrase detection - identifies products with matching phrases.

- Synonym detection - includes alternative words with the same meaning.

Use Experience Optimizer query rewrites


You can create query rewrite rules in Experience Optimizer. This is similar to using the Rules Editor to create query rewrite rules, except there are additional options in Experience Optimizer.

- In Managed Fusion, navigate to Relevance > Rules > Optimizer.

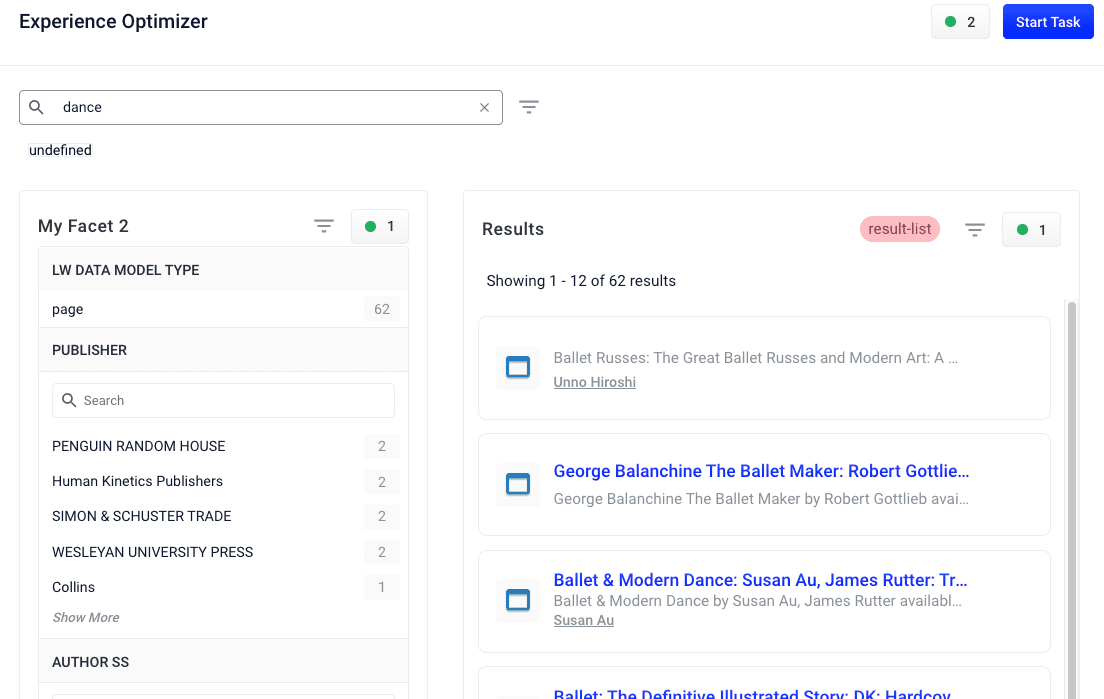

- Enter a search term or phrase in the search bar.

- Click Start Task.

- Hover your cursor next to the query. A + button appears.

- Click the + button. A list of query rewrite options appears: Head/Tail, Misspelling, Phrase, Synonym, and Remove Words.

- Click on the type of rule you’d like to configure.

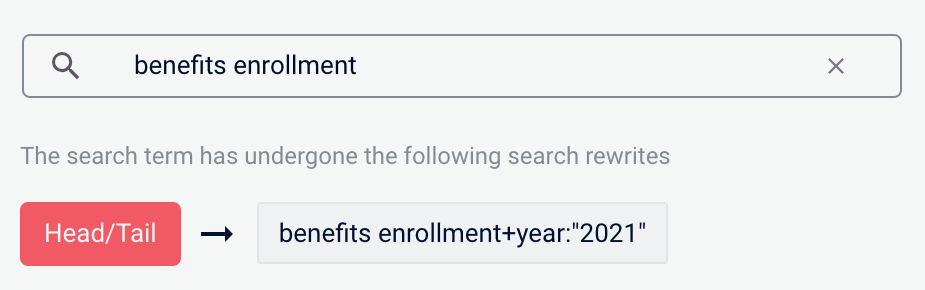

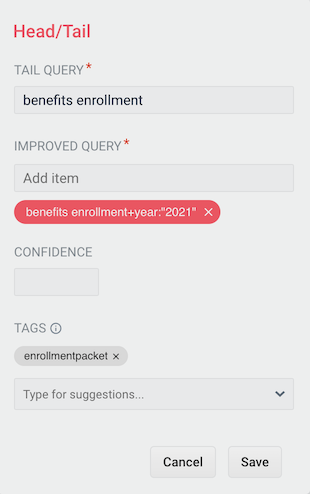

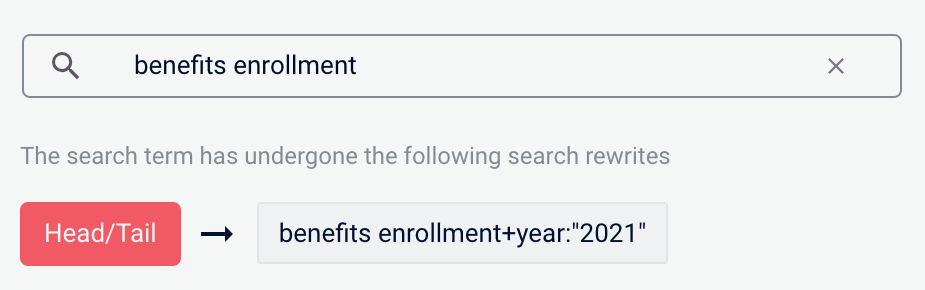

Head/Tail

You can create a Head/Tail rewrite to improve search results using methods other than misspelling correction or synonym expansion. When a poorly defined search term is identified, the original term is replaced by an improved search term.

For example, a search for benefits enrollment could be improved by using the search term benefits enrollment+year:"2021" (in this case making use of the year field in the data).

Most Head/Tail rewrites are created automatically via machine learning. However, you can also create custom rewrites manually:

- From the list of query rewrite options, select Head/Tail. A form appears:

| Parameter | Description | Example Value |
|---|---|---|
| Tail Query | The tail query itself. | benefits enrollment |
| Improved Query | The query that replaces the tail query phrase. | benefits enrollment+year:"2021" |
| Tags | Optional metadata tags that can be used to identify and organize rewrites. | enrollment, packet |

- Enter one or more improved search terms in the Improved Query field.

- Click the Save button.
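Conceptually, a Head/Tail rule swaps a whole tail query for its improved form. The Python sketch below models that with an in-memory lookup table; it assumes whole-query matching after lowercasing and whitespace normalization, which may differ from how Fusion actually matches rules. `HEAD_TAIL_RULES` and `rewrite_tail_query` are hypothetical names.

```python
from typing import Dict

# Hypothetical in-memory rule table: tail query -> improved query.
# The entry mirrors the example values in the form above.
HEAD_TAIL_RULES: Dict[str, str] = {
    "benefits enrollment": 'benefits enrollment+year:"2021"',
}


def normalize(query: str) -> str:
    """Lowercase and collapse whitespace so trivial variants match a rule."""
    return " ".join(query.lower().split())


def rewrite_tail_query(query: str, rules: Dict[str, str] = HEAD_TAIL_RULES) -> str:
    """Replace the whole query with its improved form if a rule matches."""
    return rules.get(normalize(query), query)


print(rewrite_tail_query("Benefits  Enrollment"))  # benefits enrollment+year:"2021"
print(rewrite_tail_query("payroll calendar"))      # payroll calendar
```

Queries without a matching rule pass through unchanged, so the rule only affects the specific tail query it was written for.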

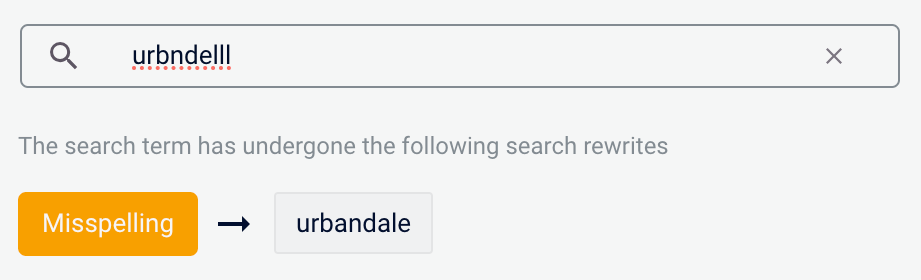

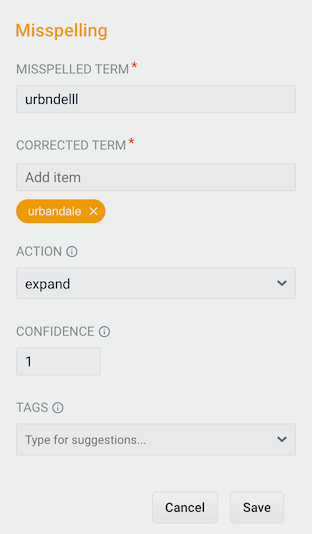

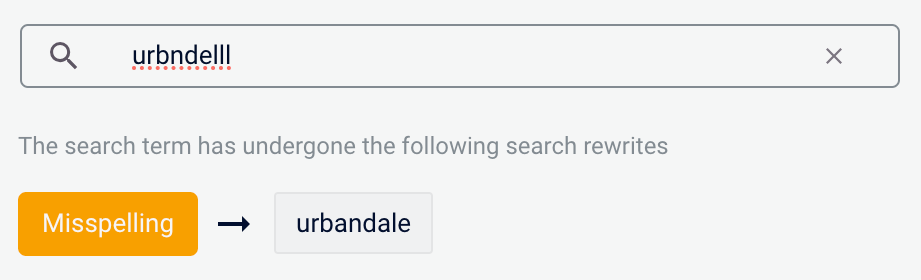

Misspelling

You can create a misspelling query rewrite to detect and correct common spelling mistakes. When a customer enters a search term containing a known misspelling, the incorrect spelling is replaced with the spelling correction.

For example, if your clients frequently misspell or mistype the word questionnaire as questionare, you can set up a query rewrite to correct it automatically.

- From the list of query rewrite options, select Misspelling. A form appears:

| Parameter | Description | Example Value |
|---|---|---|
| Misspelled Term | The misspelled term itself. | questionare |
| Corrected Term | The term that replaces the misspelled term. | questionnaire |
| Action | Action to perform. | expand |
| Confidence | Confidence score from the phrase job. A confidence level of 1 represents 100% confidence. For rules created automatically via machine learning, the confidence level reflects the output from the machine learning model. | 1 |
| Tags | Optional metadata tags that can be used to identify and organize rewrites. | enrollment, packet |

- Enter one or more spelling corrections in the Corrected Term field.

- Click the Save button.
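A misspelling rule replaces a known bad spelling with its correction wherever it appears in the query. The sketch below shows that word-level replacement in Python; it is an illustration only, and it does not model the Action or Confidence fields. `MISSPELLINGS` and `correct_misspellings` are hypothetical names.

```python
import re
from typing import Dict

# Hypothetical correction table: misspelled term -> corrected term.
MISSPELLINGS: Dict[str, str] = {"questionare": "questionnaire"}


def correct_misspellings(query: str, corrections: Dict[str, str] = MISSPELLINGS) -> str:
    """Replace each known misspelled word in the query with its correction."""

    def fix(match):
        word = match.group(0)
        # Look up case-insensitively; unknown words are left untouched.
        return corrections.get(word.lower(), word)

    return re.sub(r"\w+", fix, query)


print(correct_misspellings("employee questionare"))  # employee questionnaire
```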

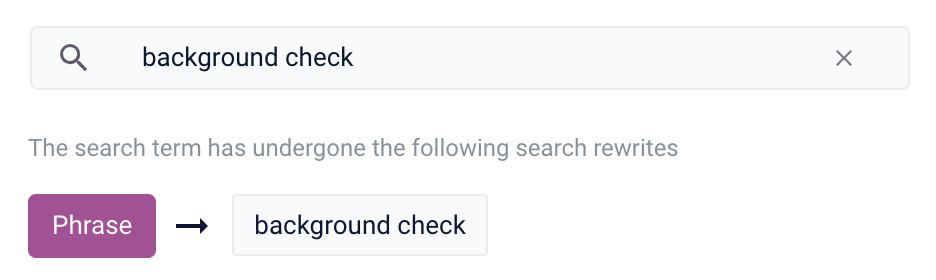

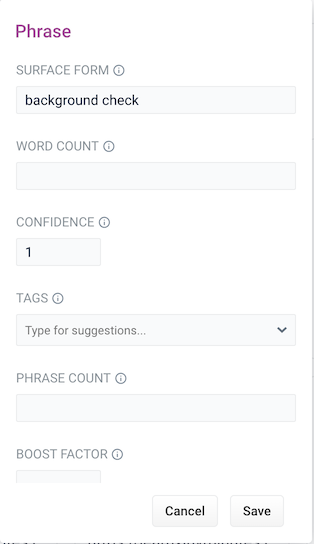

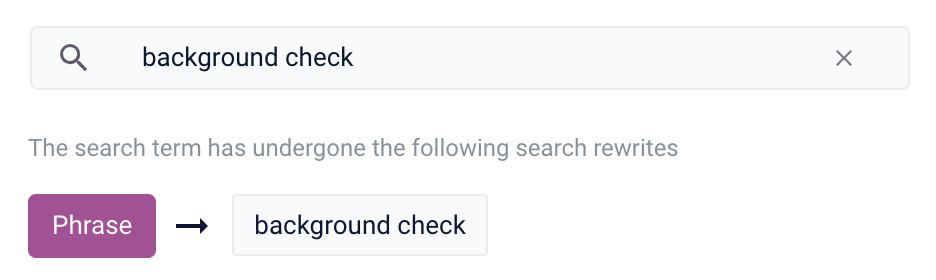

Phrase

You can use query rewriting to identify phrases used in search terms so that products with matching phrases are boosted in the search results. This is helpful when users do not use quotation marks to identify phrases in their search terms.

For example, without phrase detection, a search for the words background check would match background and check as separate words. With phrase detection, this search correctly boosts results for "background check".

- From the list of query rewrite options, select Phrase. A form appears:

| Parameter | Description | Example Value |
|---|---|---|
| Surface Form | The phrase itself. | background check |
| Word Count | Indicates how many words are included in the phrase. | 2 |
| Confidence | Confidence score from the phrase job. A confidence level of 1 represents 100% confidence. For rules created automatically via machine learning, the confidence level reflects the output from the machine learning model. | 1 |
| Tags | Optional metadata tags that can be used to identify and organize rewrites. | enrollment, packet |
| Phrase Count | Denotes how many times this phrase was found in the source. This value is set automatically via machine learning and does not need to be set manually. | 5 |
| Boost Factor | The factor to use to boost this phrase in matching queries. | 2.0 |
| Slop Factor | Phrase slop: the maximum distance allowed between the terms of the phrase for a match to still count as a phrase match. | 10 |

- Enter the number of words in the phrase in the Word Count field.

- Click the Save button.
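To make the Boost Factor and Slop Factor fields concrete, the sketch below appends a boosted phrase clause in standard Lucene query syntax (`"a b"~slop^boost`) whenever the rule's words appear consecutively in the query. This is one plausible way such a rule could be expressed, not necessarily the query Fusion generates; `PhraseRule` and `add_phrase_boosts` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PhraseRule:
    surface_form: str   # e.g. "background check"
    boost: float = 2.0  # Boost Factor
    slop: int = 10      # Slop Factor


def contains_phrase(tokens: List[str], words: List[str]) -> bool:
    """True if `words` occurs as a contiguous run inside `tokens`."""
    n = len(words)  # corresponds to the rule's Word Count
    return any(tokens[i:i + n] == words for i in range(len(tokens) - n + 1))


def add_phrase_boosts(query: str, rules: List[PhraseRule]) -> str:
    """Keep the original query and add a boosted phrase clause per detected phrase."""
    tokens = query.lower().split()
    clauses = [query]
    for rule in rules:
        if contains_phrase(tokens, rule.surface_form.lower().split()):
            # Lucene syntax: "a b"~slop^boost
            clauses.append(f'"{rule.surface_form}"~{rule.slop}^{rule.boost}')
    return " ".join(clauses)


rules = [PhraseRule("background check")]
print(add_phrase_boosts("background check", rules))
# background check "background check"~10^2.0
```

Keeping the original terms alongside the phrase clause means documents that mention only one of the words can still match, while documents containing the phrase rank higher.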

Synonym

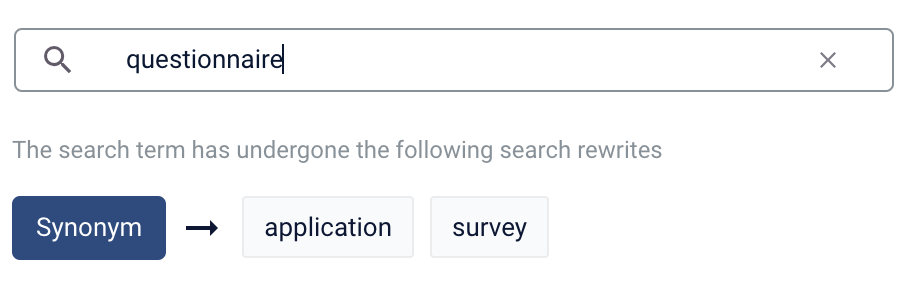

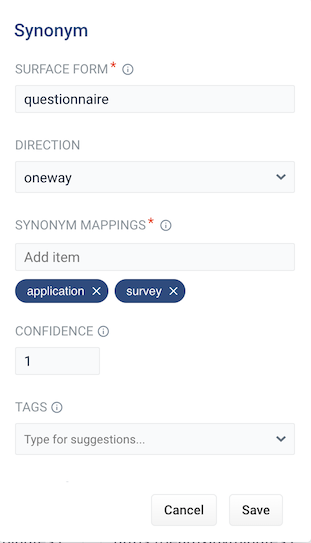

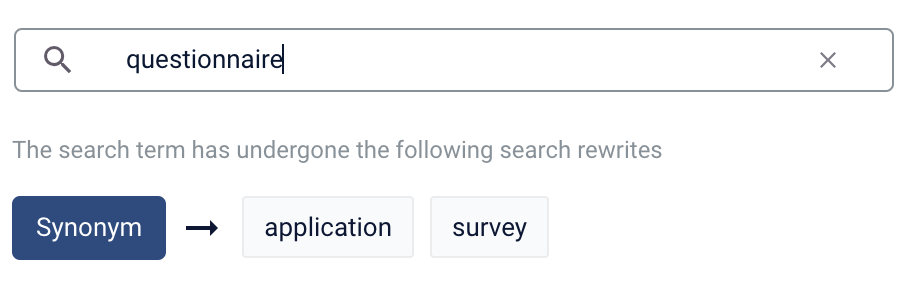

You can specify synonyms for a specified search term so that alternative words with the same meaning are automatically used in the search query. When a customer enters a search term with a synonym match, the alternative words are used instead of, or in addition to, the original search term.For example, the search termquestionnaire could have the synonyms application and survey.-

- From the list of query rewrite options, select Synonym. A form appears:

| Parameter | Description | Example Value |
|---|---|---|
| Surface Form | The term that has synonyms. | questionnaire |
| Direction | With a oneway search, the original search term is replaced by the synonyms. In the preceding example, questionnaire would be replaced by the alternative words application and survey. With a symmetric search, the search query is expanded to include the original term and the synonyms, resulting in a greater number of potential hits. In the preceding example, the query would include questionnaire, application, and survey. | symmetric |
| Synonym Mappings | Synonyms for the surface form. | application,survey |
| Confidence | Confidence score from the phrase job. A confidence level of 1 represents 100% confidence. For rules created automatically via machine learning, the confidence level reflects the output from the machine learning model. | 1 |
| Tags | Optional metadata tags that can be used to identify and organize rewrites. | enrollment, packet |
| Count | How many times this term occurred in the signal data when it was discovered. This value is optional when a rewrite is defined manually. | 5 |

- Choose whether the direction is oneway or symmetric.

- Enter one or more alternative words in the Synonym Mappings field.

- Click the Save button.
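The difference between the oneway and symmetric directions described above can be sketched as a simple query transformation. This Python illustration assumes a single-word surface form and expresses the alternatives as an OR group; it only rewrites queries that contain the surface form, and it is not Fusion's implementation. `expand_synonyms` is a hypothetical name.

```python
from typing import List


def expand_synonyms(query: str, surface_form: str,
                    mappings: List[str], direction: str) -> str:
    """Rewrite occurrences of a single-word `surface_form` using its synonyms.

    oneway:    the term is replaced by the synonyms.
    symmetric: the term is kept and the synonyms are OR-ed in alongside it.
    """
    if direction not in ("oneway", "symmetric"):
        raise ValueError(f"unknown direction: {direction!r}")
    out = []
    for term in query.split():
        if term.lower() != surface_form.lower():
            out.append(term)
            continue
        alternatives = list(mappings) if direction == "oneway" else [term, *mappings]
        out.append("(" + " OR ".join(alternatives) + ")")
    return " ".join(out)


syns = ["application", "survey"]
print(expand_synonyms("questionnaire template", "questionnaire", syns, "oneway"))
# (application OR survey) template
print(expand_synonyms("questionnaire template", "questionnaire", syns, "symmetric"))
# (questionnaire OR application OR survey) template
```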

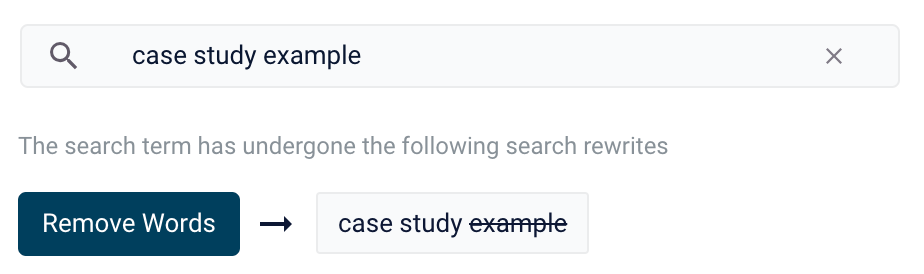

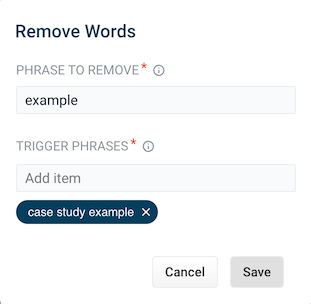

Remove Words

The Remove Words feature is available in Fusion 5.4 and later.

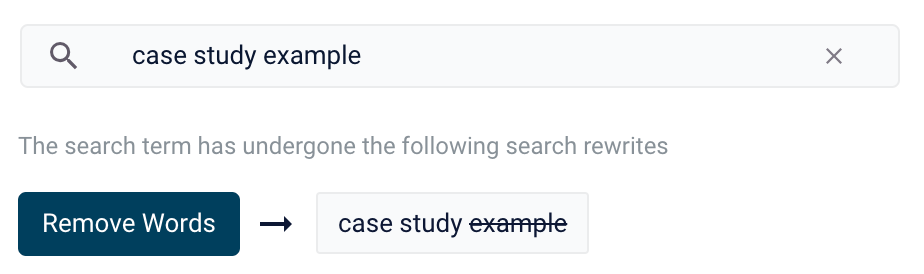

For example, you can configure a rule that modifies the query case study examples to remove examples and then display results for case study.

- From the list of query rewrite options, select Remove Words. A form appears.

- This form contains the following fields:

| Parameter | Description | Example Value |
|---|---|---|
| Phrase to remove | The words to remove from the trigger phrase. | examples |
| Trigger phrases | The query that prompts the removal of the phrase. The trigger phrase is not necessarily a complete query. If the query contains the trigger phrase, Fusion removes the phrase in the Phrase to remove field. | case study examples |

- Enter a phrase to remove and a trigger phrase. Note that the phrase to remove is auto-populated with the query.

- Click Save.
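The Remove Words behavior described in the table can be sketched at the token level: if the query contains the trigger phrase, the phrase to remove is deleted from within that trigger span and the rest of the query is kept. This Python sketch assumes whitespace tokenization and case-insensitive matching; `remove_words` and `_find` are hypothetical names.

```python
from typing import List


def _find(tokens: List[str], sub: List[str]) -> int:
    """Index of the first contiguous occurrence of `sub` in `tokens`, or -1."""
    n = len(sub)
    for i in range(len(tokens) - n + 1):
        if tokens[i:i + n] == sub:
            return i
    return -1


def remove_words(query: str, phrase_to_remove: str, trigger_phrase: str) -> str:
    """Drop `phrase_to_remove` from the query if the trigger phrase is present."""
    tokens = query.split()
    lowered = [t.lower() for t in tokens]
    trigger = trigger_phrase.lower().split()
    start = _find(lowered, trigger)
    if start == -1:
        return query  # trigger phrase absent: leave the query unchanged
    end = start + len(trigger)
    remove = phrase_to_remove.lower().split()
    # Only look for the phrase to remove inside the matched trigger span.
    offset = _find(lowered[start:end], remove)
    if offset == -1:
        return query
    cut = start + offset
    return " ".join(tokens[:cut] + tokens[cut + len(remove):])


print(remove_words("case study examples", "examples", "case study examples"))  # case study
```

Because the trigger phrase need not be the complete query, a longer query such as marketing case study examples pdf would become marketing case study pdf under this sketch.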

Experience Optimizer: Rewrites Manager

The course for Experience Optimizer: Rewrites Manager focuses on how to create search rewrites that optimize your users’ search results.

Optimize zero results

Search queries that return zero results can drive customers away from your site. You can set certain documents to display when a customer search generates zero results.

Optimizing Zero Results

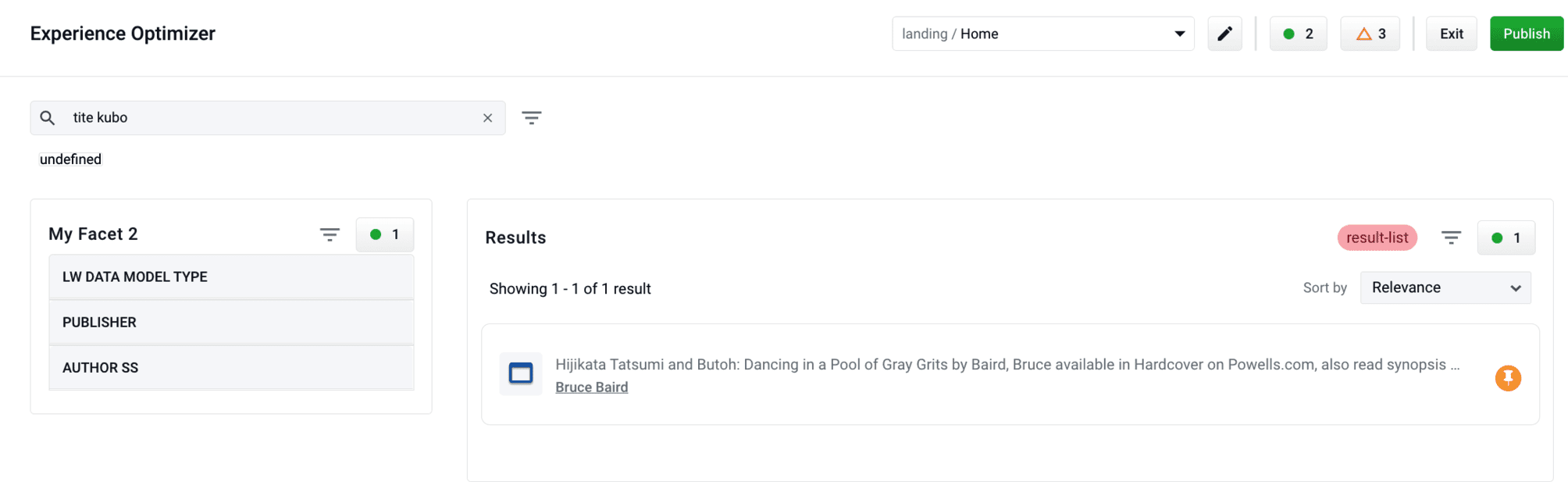

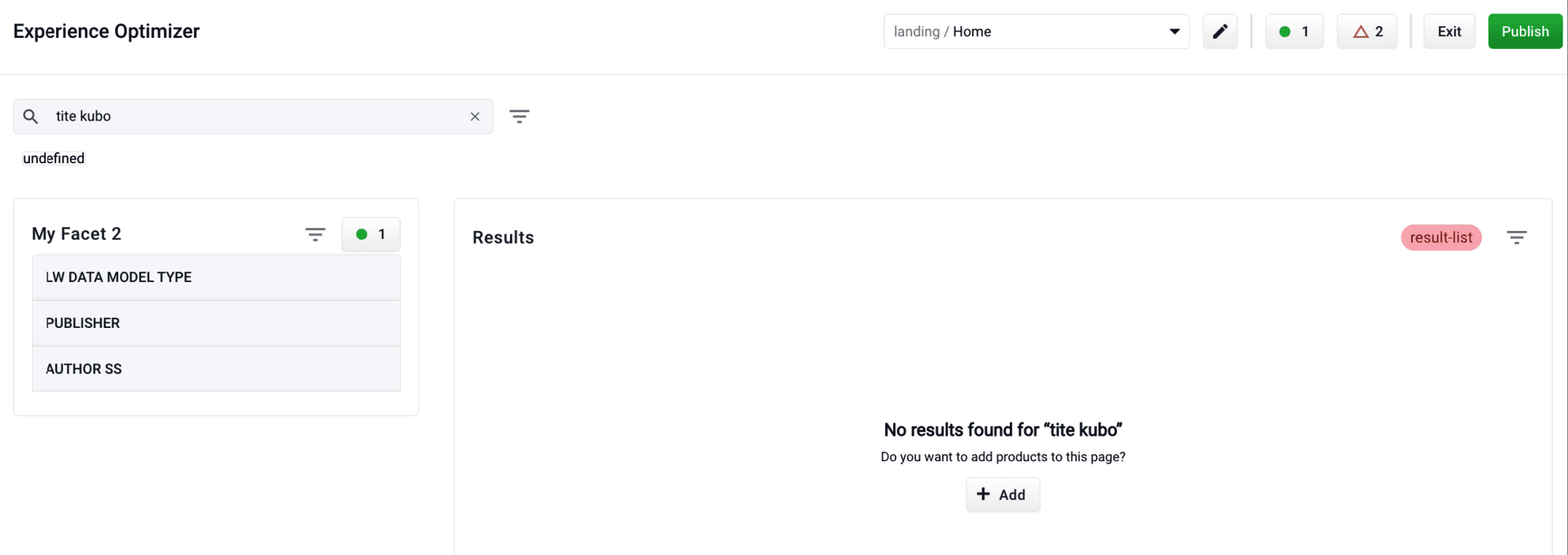

- Enter a search query that generates 0 results:

- Click the Add button:

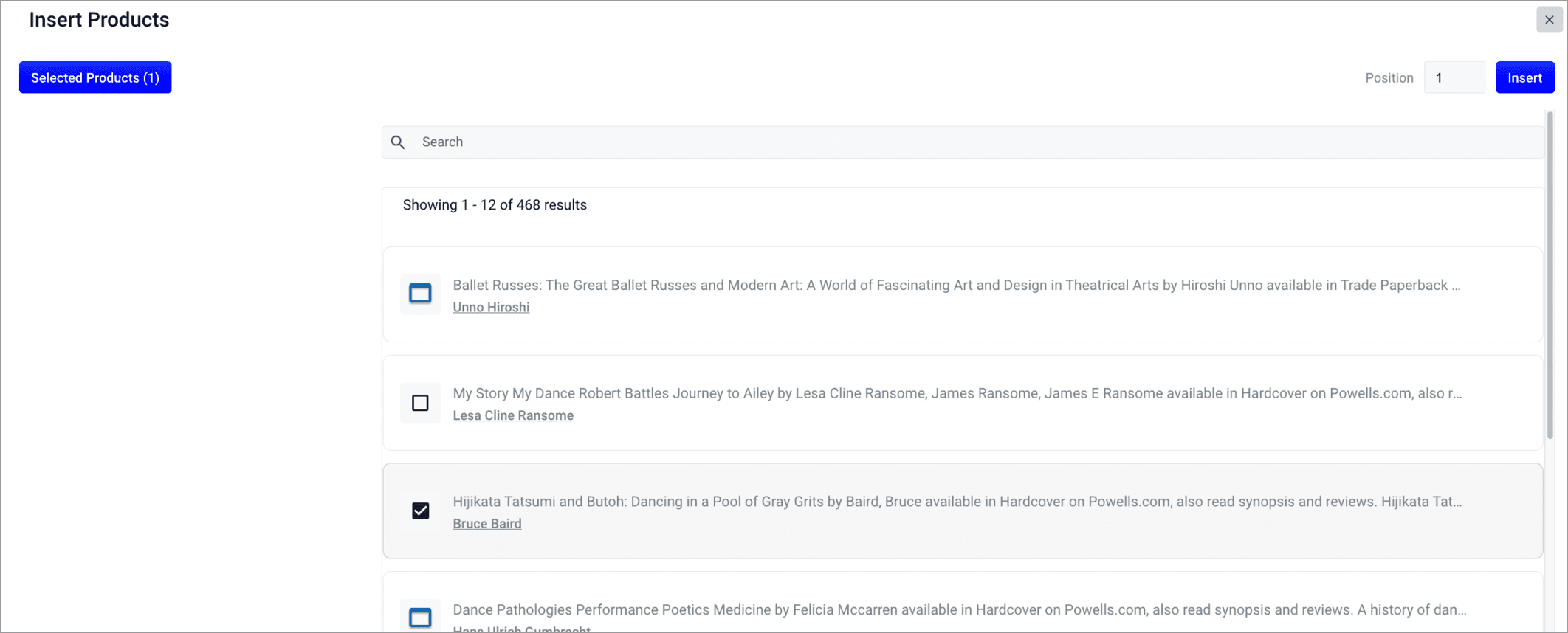

- Select documents or document groups that you want to associate with the 0 results query:

- Click the Insert button. The selected products are now displayed when the search query is used, resolving the 0 results query:
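The effect of these steps can be pictured as a fallback: when a query returns nothing, the documents you inserted for it are shown instead. The Python sketch below models that with a hypothetical mapping from a zero-results query to document IDs; the query, the IDs, and the `search_with_fallback` helper are all invented for illustration and do not reflect how Fusion stores these associations.

```python
from typing import Callable, Dict, List

# Hypothetical pins: zero-results query -> IDs of the documents chosen with Insert.
ZERO_RESULT_PINS: Dict[str, List[str]] = {
    "ballet shoes for toddlers": ["doc-123", "doc-456"],
}


def search_with_fallback(query: str,
                         search: Callable[[str], List[str]],
                         pins: Dict[str, List[str]] = ZERO_RESULT_PINS) -> List[str]:
    """Return normal results, or the pinned documents when there are none."""
    results = search(query)
    if results:
        return results
    key = " ".join(query.lower().split())  # normalize case and spacing
    return list(pins.get(key, []))


def no_hits(q: str) -> List[str]:
    return []  # stand-in search backend that always finds nothing


print(search_with_fallback("Ballet shoes for toddlers", no_hits))  # ['doc-123', 'doc-456']
```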